Shann Holmberg

Head of Product

MAY 6, 2026

Codex Passed Claude Code on npm: What the Spike Really Means

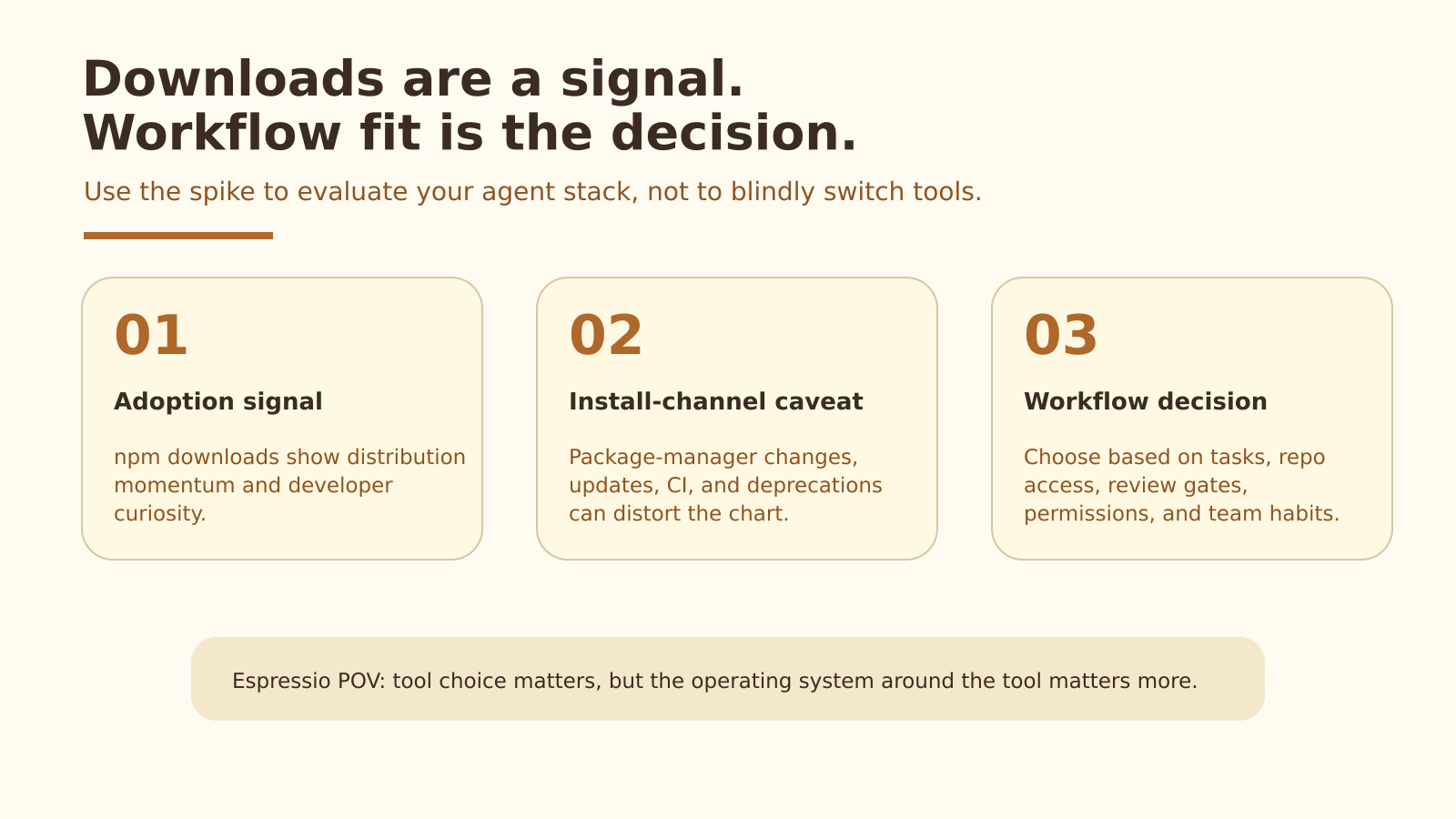

A Reddit thread in r/codex pointed out a striking shift: OpenAI Codex appears to have overtaken Claude Code on npm downloads. That makes for a good headline, but it should not become the whole strategy.

For teams comparing Codex vs Claude Code, the useful question is not which tool won the npm chart this week. It is which AI coding agent can fit safely inside the team’s real development workflow, with the right permissions, review gates, and success metrics.

Key takeaways

- Codex is ahead of Claude Code on npm downloads, based on npm API data collected on 2026-05-06 UTC.

- The signal is meaningful, but noisy, because Claude Code now lists npm installation as deprecated.

- Teams should compare Codex vs Claude Code by workflow fit, review quality, task routing, permissions, and AI agent adoption pilot outcomes.

What changed in the Codex vs Claude Code npm data?

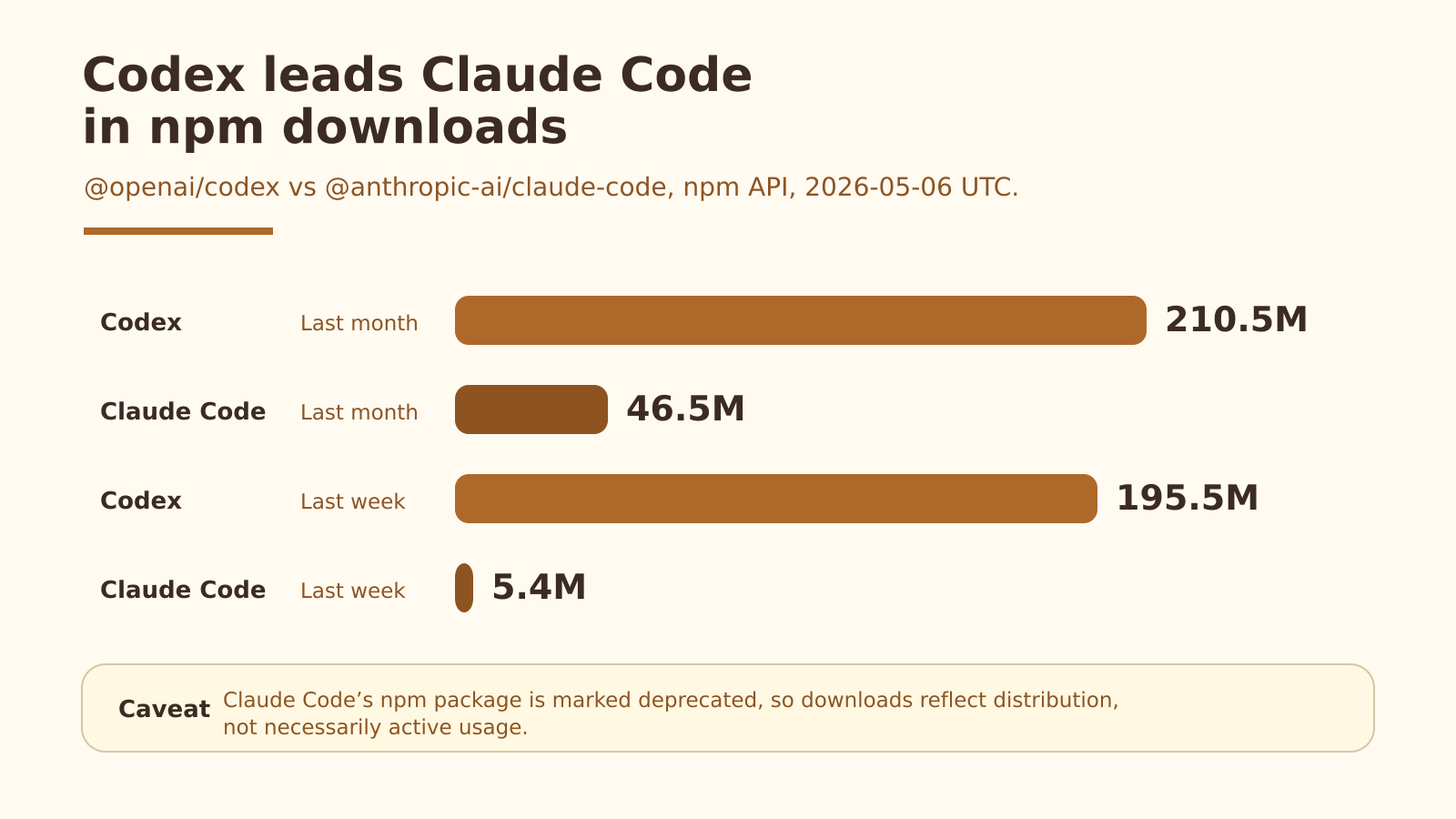

Codex is currently ahead of Claude Code on npm downloads. According to the npm public downloads API, queried on 2026-05-06 UTC, @openai/codex had about 210.5 million downloads in the last-month window, while @anthropic-ai/claude-code had about 46.5 million.

That is the headline signal. Codex was roughly 4.5x ahead over the last-month window. The last-week gap was wider still: about 195.5 million downloads for Codex against about 5.4 million for Claude Code, or roughly 36x.
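The arithmetic behind those comparisons can be checked in a few lines. This is a minimal sketch that uses only the rounded figures quoted in this article; the variable names are illustrative.

```python
# Rounded figures quoted in this article, in millions of downloads
# (npm data collected on 2026-05-06 UTC).
codex_month, claude_month = 210.5, 46.5
codex_week, claude_week = 195.5, 5.4

# How far ahead Codex is in each window.
month_ratio = codex_month / claude_month
week_ratio = codex_week / claude_week

# Share of each package's last-month total that falls in the last-week window.
codex_week_share = codex_week / codex_month
claude_week_share = claude_week / claude_month

print(f"last-month ratio: {month_ratio:.1f}x")          # 4.5x
print(f"last-week ratio: {week_ratio:.1f}x")            # 36.2x
print(f"Codex last-week share: {codex_week_share:.0%}")    # 93%
print(f"Claude Code last-week share: {claude_week_share:.0%}")  # 12%
```

On these figures, most of Codex's last-month total arrived in the last-week window, which is why the word "spike" fits better than "steady lead".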

Those numbers are worth tracking because npm downloads can reveal distribution momentum. They suggest that Codex is being pulled into more developer environments through a familiar installation path.
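For teams that want to re-check the numbers over time, here is a minimal sketch of how a point count could be pulled from npm's public downloads API with the Python standard library. The helper names are hypothetical, and the sample payload is only shaped like an API response; its count is this article's rounded figure, not a real response.

```python
import json
import urllib.request
from urllib.parse import quote

NPM_DOWNLOADS_API = "https://api.npmjs.org/downloads/point"


def point_url(package: str, period: str = "last-month") -> str:
    """Build the point-count URL; '@' and '/' are kept so scoped names stay intact."""
    return f"{NPM_DOWNLOADS_API}/{period}/{quote(package, safe='@/')}"


def parse_downloads(payload: str) -> int:
    """Extract the download count from a JSON response body."""
    return int(json.loads(payload)["downloads"])


def fetch_downloads(package: str, period: str = "last-month") -> int:
    """Network call: fetch and parse one package's count for the given period."""
    with urllib.request.urlopen(point_url(package, period), timeout=10) as resp:
        return parse_downloads(resp.read().decode("utf-8"))


# Offline demonstration with an illustrative payload (not a real API response).
sample_payload = '{"downloads": 210500000, "package": "@openai/codex"}'
print(point_url("@openai/codex"))
print(parse_downloads(sample_payload))
```

Calling `fetch_downloads("@openai/codex", "last-week")` and the same for `@anthropic-ai/claude-code` on a schedule would give a trend line rather than a single snapshot, which is more useful given how noisy one reading can be.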

Codex has overtaken Claude Code on npm downloads, but install-channel changes make the signal noisy.

Are npm downloads a reliable adoption signal?

npm downloads are useful, but they are not a clean measure of real AI coding agent adoption. They can show install activity, curiosity, package-manager momentum, and distribution strategy. They do not prove daily usage, retention, output quality, or whether generated code survives review.

The caveat matters here because Claude Code’s own README now says npm installation is deprecated. Its recommended install paths include curl, Homebrew, PowerShell, and WinGet. Codex, by contrast, still promotes npm as a primary global install method.

That means this comparison is partly measuring install-channel strategy. A tool can look weaker on npm because its users moved to another install path, not because it lost developer mindshare.

A few things can distort npm download data:

- package-manager preference

- automated updates

- CI installs

- mirrors and cache behavior

- deprecated install paths

- repeated installs across machines or environments

So yes, Codex overtaking Claude Code on npm is a real distribution signal. No, it is not proof that Codex is better for every team.

Downloads can show momentum. They do not prove daily team usage.

What does the Codex spike probably mean?

The Codex spike probably means OpenAI has a strong distribution advantage. Codex can be introduced through the terminal, IDEs, Codex Web, the Codex app, and the broader ChatGPT ecosystem. For teams already using OpenAI, the path from curiosity to trial is short.

That convenience matters. AI coding agent adoption often starts with one developer trying the tool, sharing an example, and creating enough internal proof for others to test it.

When distribution is easy, experimentation speeds up. When experimentation speeds up, teams get more examples to evaluate. Those examples become the basis for procurement, workflow design, policy, and internal enablement.

That is why the npm spike matters. It suggests Codex is entering more developer environments, even if the chart does not tell us how deeply teams use it.

What does the spike not prove?

The spike does not prove Codex is the better AI coding agent in every situation. It does not prove Claude Code usage is shrinking across all channels. It does not measure serious repo work, retention, review burden, escaped bugs, or team-level productivity.

That distinction matters because tool races are easy to overread. Codex vs Claude Code is a real comparison, but the winner inside a company is rarely the tool with the strongest public chart.

The winner is the agent that fits the operating model. That means the right tasks, the right context, the right permissions, and the right review path.

A team can install an agent quickly and still fail to use it well. A team can also use a less-hyped tool successfully because it fits a mature workflow.

Expert perspective: Tim Haldorsson on Codex adoption

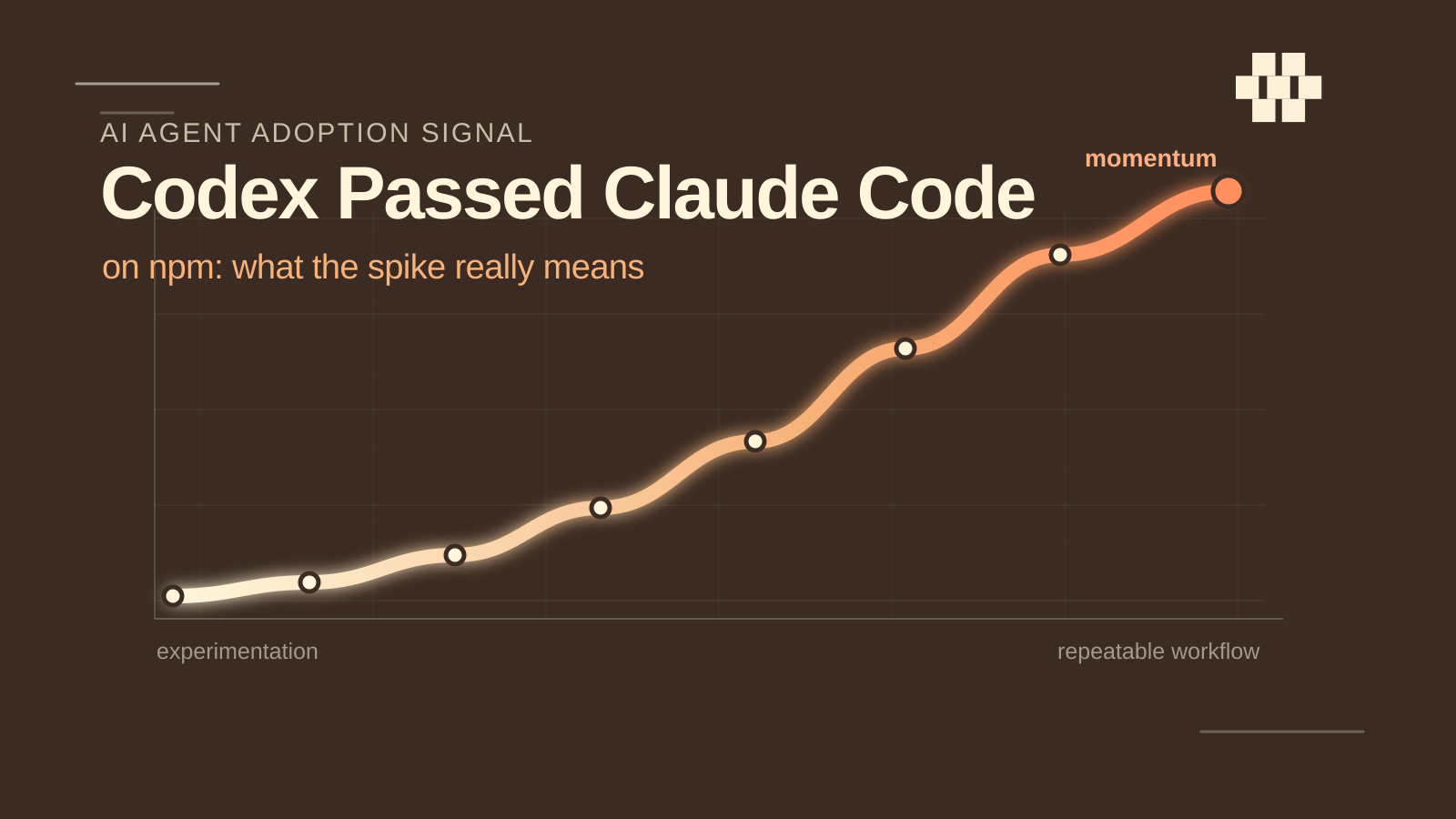

Tim Haldorsson, OpenAI Ambassador in Portugal and member of the Espressio team, sees the npm spike as a useful signal, but not as the whole story.

“The npm spike is a useful signal because it shows how quickly developers are experimenting with Codex. But downloads are only the first step. The real test is whether teams can turn that experimentation into a repeatable workflow with clear tasks, permissions, and review gates.” - Tim Haldorsson, OpenAI Ambassador in Portugal and Espressio team member

For teams comparing Codex and Claude Code, Haldorsson says the workflow matters more than the chart.

“When teams compare Codex and Claude Code, I would not start with the chart alone. I would start with the workflow: where the agent runs, what it is allowed to change, how humans review the output, and whether the team can measure improvement over the current process.” - Tim Haldorsson, OpenAI Ambassador in Portugal and Espressio team member

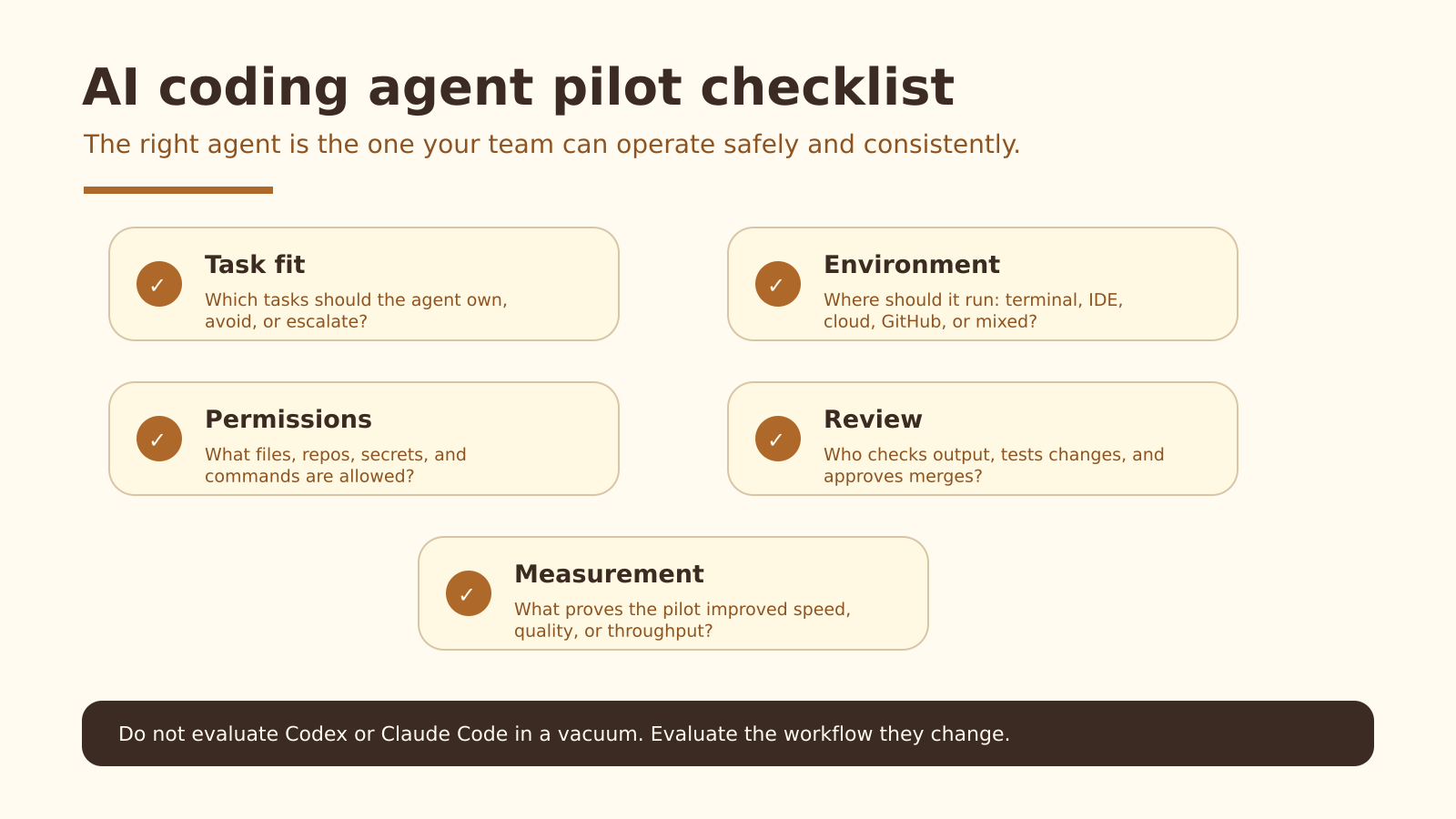

How should teams compare Codex vs Claude Code?

Teams should compare Codex vs Claude Code through workflow fit, not a feature checklist alone. The best pilot asks which agent helps the team complete specific work with less friction, fewer mistakes, and review gates that people actually trust.

A practical comparison should cover five areas.

1. What tasks should the agent own?

Good agent tasks tend to have clear boundaries: boilerplate, test scaffolding, documentation updates, small refactors, migration chores, and isolated bug fixes.

Riskier tasks need tighter controls. Architecture changes, auth flows, payments, data migrations, security-sensitive logic, and quality-critical product work should not be handed to an agent without stronger review.
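One way to make that split explicit is a small lookup that maps a task category to a review level. This is a sketch under stated assumptions: the categories come from the two paragraphs above, while the `Review` labels, set names, and the rule that unknown work defaults to the stricter level are illustrative choices, not a standard.

```python
from enum import Enum


class Review(Enum):
    STANDARD = "standard review"
    STRICT = "stronger review"


# Categories taken from the text above; the grouping names are hypothetical.
BOUNDED_TASKS = {
    "boilerplate", "test scaffolding", "documentation update",
    "small refactor", "migration chore", "isolated bug fix",
}
SENSITIVE_TASKS = {
    "architecture change", "auth flow", "payments", "data migration",
    "security-sensitive logic", "quality-critical product work",
}


def review_level(task_category: str) -> Review:
    """Return the review level for a task category."""
    if task_category in SENSITIVE_TASKS:
        return Review.STRICT
    if task_category in BOUNDED_TASKS:
        return Review.STANDARD
    # Anything not yet classified gets the stricter path by default.
    return Review.STRICT


print(review_level("test scaffolding").value)  # standard review
print(review_level("payments").value)          # stronger review
```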

2. Where should the agent run?

A terminal-first workflow is different from an IDE workflow. A cloud delegation workflow is different from local pair-programming. A GitHub-integrated workflow is different from a founder asking an agent to prototype a feature.

The environment changes the permission model, review process, and failure modes.

3. What permissions does it need?

An AI coding agent should not automatically inherit every permission a developer has. Teams need explicit rules for repo access, file access, command execution, secrets, production data, package installation, and external API calls.

The more autonomy an agent receives, the more important this layer becomes.
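As a sketch of what "explicit rules" could look like, the policy object below denies everything unless it is granted. Every field and method name is hypothetical, and the checks are deliberately simple; neither Codex nor Claude Code is assumed to read a policy in this form.

```python
import posixpath
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Deny-by-default permissions; all names here are illustrative."""
    writable_dirs: tuple[str, ...] = ()
    allowed_commands: frozenset[str] = frozenset()
    may_read_secrets: bool = False
    may_touch_production_data: bool = False
    may_install_packages: bool = False
    may_call_external_apis: bool = False

    def can_write(self, path: str) -> bool:
        # Normalize first so "docs/../.env" cannot slip past a "docs" rule.
        normalized = posixpath.normpath(path)
        return any(
            normalized.startswith(d.rstrip("/") + "/") for d in self.writable_dirs
        )

    def can_run(self, command: str) -> bool:
        # Only the executable name is checked; arguments are not inspected.
        parts = command.split()
        return bool(parts) and parts[0] in self.allowed_commands


pilot_policy = AgentPolicy(
    writable_dirs=("docs", "tests"),
    allowed_commands=frozenset({"pytest", "ruff"}),
)
print(pilot_policy.can_write("tests/test_api.py"))  # True
print(pilot_policy.can_write("docs/../.env"))       # False
print(pilot_policy.can_run("rm -rf build"))         # False
```

Starting from an empty policy and adding grants one at a time keeps the conversation about each permission explicit, which is the point of this layer.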

4. How will humans review the output?

Agent output needs a clear review path. That might include tests, linting, type checks, staged diffs, code review, sandbox runs, security checks, or approval before merge.

Without review gates, teams end up trusting work because it looks plausible. Plausible is not the same as correct.
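A review path can be expressed as a set of named gates that must all pass before a human approval counts. The sketch below is illustrative: the gate names and the `ready_to_merge` helper are assumptions, and each lambda stands in for a real check such as a test run or a linter.

```python
from typing import Callable

Gate = Callable[[], bool]


def failed_gates(gates: dict[str, Gate]) -> list[str]:
    """Run every gate and return the names of those that did not pass."""
    return [name for name, check in gates.items() if not check()]


def ready_to_merge(gates: dict[str, Gate], human_approved: bool) -> bool:
    """Agent output merges only if all gates pass and a human approved it."""
    return human_approved and not failed_gates(gates)


# Placeholder gates; in practice each would invoke a real tool.
example_gates = {
    "tests": lambda: True,
    "lint": lambda: True,
    "type_check": lambda: False,
}
print(failed_gates(example_gates))                         # ['type_check']
print(ready_to_merge(example_gates, human_approved=True))  # False
```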

5. How will you measure the pilot?

A good pilot needs a definition of success. Measure cycle time, review burden, escaped bugs, test coverage, developer satisfaction, and the percentage of agent output that survives review.

If the agent creates more review work than it saves, the workflow is not working yet.
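Two of those measures are easy to compute once a pilot logs a few counts. The sketch below assumes a hypothetical `PilotStats` record; the sample values are made up for illustration and are not results from any real pilot.

```python
from dataclasses import dataclass


@dataclass
class PilotStats:
    changes_proposed: int         # agent changes submitted for review
    changes_merged: int           # agent changes that survived review
    authoring_hours_saved: float  # estimated time the agent saved authors
    extra_review_hours: float     # added review time caused by agent output

    def survival_rate(self) -> float:
        """Fraction of agent output that survives review."""
        if self.changes_proposed == 0:
            return 0.0
        return self.changes_merged / self.changes_proposed

    def review_exceeds_savings(self) -> bool:
        """True when the agent creates more review work than it saves."""
        return self.extra_review_hours > self.authoring_hours_saved


# Made-up sample values, for illustration only.
sample = PilotStats(
    changes_proposed=40,
    changes_merged=30,
    authoring_hours_saved=20.0,
    extra_review_hours=26.0,
)
print(f"{sample.survival_rate():.0%}")  # 75%
print(sample.review_exceeds_savings())  # True
```

In this made-up case a 75% survival rate looks healthy on its own, yet the review cost still outweighs the savings, so both numbers need to be read together.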

The right AI coding agent is the one your team can operate safely and consistently.

Should teams switch from Claude Code to Codex?

Teams should not switch from Claude Code to Codex because of npm downloads alone. The download shift is a reason to investigate Codex, run a structured pilot, and compare workflow outcomes. It is not a migration plan.

Teams should consider testing Codex if they already work inside the OpenAI ecosystem, want to experiment with Codex Web or multi-surface workflows, or need a simpler path for engineers and non-engineers to collaborate around coding tasks.

Teams should be slower to switch if Claude Code is already embedded in their workflows, developers have strong habits around it, or the team has already built prompts, commands, plugins, and review processes around Claude Code.

It may also be reasonable to use both. One agent might fit local repo work. Another might fit delegated tasks, prototypes, or specific coding chores. The key is to define routing rules instead of letting each person pick tools ad hoc.
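A routing rule can be as small as a shared table that every task goes through. In this sketch `agent_a` and `agent_b` are placeholders rather than a recommendation for either product, and failing loudly on unrouted work is an assumed design choice.

```python
# Task classes from the paragraph above; agent names are placeholders.
ROUTES = {
    "local repo work": "agent_a",
    "delegated task": "agent_b",
    "prototype": "agent_b",
}


def route(task_class: str) -> str:
    """Return the agent assigned to a task class, or fail if none is defined."""
    try:
        return ROUTES[task_class]
    except KeyError:
        raise ValueError(
            f"no routing rule for {task_class!r}; add one before assigning an agent"
        ) from None


print(route("local repo work"))  # agent_a
print(route("prototype"))        # agent_b
```

Raising an error for an unknown task class forces the team to add a rule deliberately instead of falling back to personal preference.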

Espressio POV: design the workflow around the agent

The Codex download spike is a useful moment because it shows how quickly the AI coding agent market is moving. But teams do not get value from AI agents by chasing every spike. They get value by designing the system around the agent.

That system includes task selection, review gates, permissions, handoff, documentation, measurement, and clear rules for when a human takes back control.

At Espressio, we think about AI agents this way: not as isolated tools, but as parts of a working AI operating system. The tool matters. The model matters. But the operating system around the agent is what determines whether the workflow becomes useful, safe, and repeatable.

If your team is testing Codex, Claude Code, or a mixed AI coding agent stack, Espressio can help design the workflow around the tools: what agents should own, how humans should review them, and how to turn experiments into reliable operating systems.

Frequently Asked Questions

Did Codex overtake Claude Code?

By npm downloads, yes. In the npm data collected on 2026-05-06 UTC, @openai/codex was ahead of @anthropic-ai/claude-code over both the last-month and last-week windows.

Are npm downloads a reliable measure of AI coding agent adoption?

They are useful, but incomplete. npm downloads can show distribution momentum and install activity, but they do not prove daily active usage, retention, output quality, or production impact. In this case, the signal is especially noisy because Claude Code now lists npm installation as deprecated.

Is Codex better than Claude Code?

Not universally. Codex may be a better fit for teams already using OpenAI and wanting multiple surfaces for agent work. Claude Code may remain a better fit for teams with strong local terminal workflows and existing habits around Claude. The better choice depends on workflow fit.

Should my team switch from Claude Code to Codex?

Not based on download data alone. Treat the download shift as a reason to run a structured pilot, not as a reason to migrate immediately.

Can teams use Codex and Claude Code together?

Yes. Some teams may use different agents for different jobs. The important part is to define routing rules: which agent handles which task, what permissions it gets, and how the output is reviewed.

What is the best way to pilot an AI coding agent?

Pick a narrow task class, define success metrics, set permission boundaries, require review gates, and compare the agent workflow against your current process. A pilot should prove that the agent improves the workflow, not just that it can generate code.

Source notes

- npm public downloads API for @openai/codex, retrieved 2026-05-06.

- npm public downloads API for @anthropic-ai/claude-code, retrieved 2026-05-06.

- OpenAI Codex GitHub repository and README, retrieved 2026-05-06.

- Anthropic Claude Code GitHub repository and README, retrieved 2026-05-06.

- Reddit discussion in r/codex, retrieved 2026-05-06.